Go to Work Wasted

You gotta go to work high if you're getting paid low

You gotta put your nose to the grindstone stoned

That elbow grease will get you fucked

You don't wanna be a jerk or schmuck

— NOFX, "Go to Work Wasted"

Mark,

Have you seen the dashboards yet?

Token usage per engineer. "AI utilization metrics." Slack threads from VPs asking why Dave only generated 40k tokens last sprint when the team average is 120k. Pair programming mandates, but now with a machine, and the machine keeps a log.

The costume is new. The impulse is not.

The Same Song, A New Costume

I killed standup because it was surveillance disguised as coordination.

Management replaces trust with measurement. Whatever we name the measurement ("alignment," "visibility," "accountability"), the name doesn't matter. The grip does.

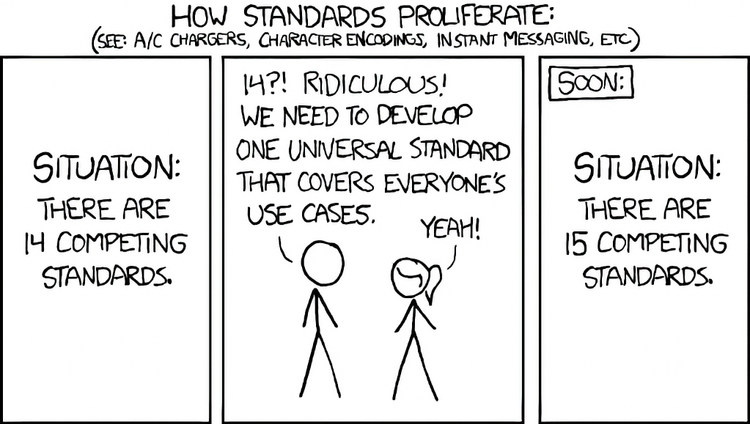

The tools change. Standup became Jira became burndown became velocity became acceptance rate became tokens. Each generation of the tool is sold as the one that will finally give management the visibility they deserve. Each generation produces the same thing: performative behavior, exhausted engineers, and meetings about why the metric isn't telling us what we need.

Now it's AI. And it's the same play.

"Everyone must use the company-approved agentic coding platform." "Every PR must show evidence of AI-assisted authorship." "Team X's token count is lagging; please address in your 1:1s." All three are in circulation right now.

Different companies. Same playbook.

The mandate is the problem. Not the tool being mandated.

The Mandate Is the Problem

Here's what mandates do. They take a behavior that some people might choose under the right conditions, and they turn it into a compliance check.

The engineer who was using Copilot because it made their tedious Tuesday refactor faster? They keep doing that. The engineer who wasn't? Now they use it to write comments they would have written anyway and call it augmented productivity. They use it to generate the boilerplate they would have copied from a neighboring file and call it AI-assisted development.

Nothing changed. Except now there's a meter.

And the meter does not measure what you think it measures. It measures whether the mandate is producing observable activity. It cannot measure whether any of that activity is good, whether anyone learned anything, whether the code is better, or whether the engineer understands what they shipped. It measures the grinding. It cannot measure whether anything useful emerges from the grind.

This is the NOFX problem. You can mandate that people show up. You can mandate that they clock tokens. You can mandate nose-to-the-grindstone. You cannot mandate the spirit behind any of it. People find their own way to survive the grind. The mandate guarantees only the grind.

Industrializing Humanist Principles

Every generation of management consultant discovers some humanist principle that works, strips it of its human context, industrializes it into a mandate, and then acts confused when it stops working.

Pair programming came out of Extreme Programming (XP). It was a practice people chose because two people thinking together on a hard problem often produced better work than one person thinking alone. It required trust. It required two people who wanted to be in the room together. It required that neither of them was being surveilled.

Then it got mandated. Two engineers assigned to a pair. One is senior, one is junior, and the assignment is really "senior person teaches junior person while we get credit for mentorship." Or both are senior and the assignment is really "we don't trust either of you to write this alone." The practice that required trust became a tool for expressing distrust.

Standup, same story. Started as a self-organizing team taking five minutes to figure out what they're each doing. Became a status meeting where everyone performs productivity for a manager. Became a daily affirmation of the power imbalance.

Retrospectives, same story. Started as a space for the team to reflect honestly. Became a structured meeting with a facilitator and sticky notes and an action item tracker that makes sure nobody says anything dangerous.

Now AI. The tool was interesting when people picked it up because they found it useful. The moment it becomes a mandate, it becomes another thing to perform around.

The pattern is always the same. Someone finds a thing that works when chosen. Management cannot tolerate "when chosen." The only thing management can mandate is the shell. The shell is not the thing. The shell never was.

The Meter Measures the Meter

There's a specific failure mode with measurement that I want to name for you, Mark, because it gets worse the more sophisticated the metric becomes.

Token count is an especially clean example. It is completely unrelated to output. An engineer could generate 500k tokens in a sprint writing code that gets reverted the next week. An engineer could generate 20k tokens shipping the most valuable feature the team produced that quarter. The token count can't tell you which is which.

What the token count tells you is whether the engineer is performing the ritual of AI use. That's it. The metric has collapsed into measuring its own enforcement.

And engineers know this. Engineers always know this. The ones who want to keep their jobs find ways to get their token count up. The ones who don't want to perform leave. The metric converges, over time, to the average token count of people willing to perform for the metric. It tells you nothing about whether anything valuable is being built.

Goodhart's Law in an AI costume. I wrote about this in week 14. When a measure becomes a target, it ceases to be a good measure. The mandate turns the measure into a target the moment it's attached to someone's 1:1.

What Actually Works

Here's what I've seen work, for what it's worth.

I gave a voluntary demo several weeks ago. Showed the team my personal Claude-based knowledge management system. Nobody had to come. Nobody had to adopt it. I explained what it was, what it did for me, and what its limits were.

Three people asked me follow-up questions in the weeks after. One built their own version for a completely different use case. One said it wasn't for them but wanted to know how I was thinking about prompt design. One just wanted to complain about coworkers whose AI use was making the code worse, and didn't know what to do about it.

Three conversations. Zero tokens tracked. Zero mandates issued.

That's the thing mandates can't produce. The wanting to. The reaching toward a tool because it solves a problem you actually have. The quiet decision to try a thing because someone you trust said it helped them. You can't mandate that into existence. You can only build the conditions where it becomes possible.

You don't need a mandate. You need engineers who'll show each other what they found because they want to. You don't need a token dashboard. You need a 1:1 that asks "what did you learn?" instead of "why is your number low?" You don't need to mandate adoption. You need to make "no thanks, not for this" a sentence somebody can say without hurting their performance review.

None of that is sexy. None of it gives a VP a way to demonstrate their own value to the company. But it's the thing that actually produces what the mandate is pretending to produce.

For Mark

You're on the other side of this from me. You have an org that wants metrics. You have VPs who want dashboards. You have a board that wants to know whether the AI investment is paying off. I'm not pretending those pressures don't exist. I know they do.

But the answer to "is our AI investment paying off" is not "token count." The answer is "did we ship better software faster, with engineers who understood what they shipped and would do it the same way again." That's a qualitative answer. It requires trust. It requires conversation. It requires managers who can tell the difference between activity and work.

I don't have a clean tool to give you for that. I wish I did. What I have is the negative lesson: every time we've industrialized a practice into a mandate, the mandate has destroyed the thing it was trying to capture. Pair programming. Standup. Retrospectives. Now AI. Same play, same failure, different costume.

Engineers will find a way to survive; they always do. But will they go to work wasted? Will they put their nose to the grindstone stoned? The metric will climb, but how will the code get any better?

Today is 4/20. Somewhere an engineer is generating tokens to hit quota and thinking about the joint they're going to smoke after work to feel like a human again. The mandate produced both of those things simultaneously. It's the same system.

Build the conditions. Don't mandate the behavior.
